
A team of researchers from MIT, The Hong Kong University of Science and Technology, and ETH Zurich has developed a mind-blowing tool that not only creates digital twins of the physical objects around you, but also makes them function like the real thing in mixed reality (MR). Whether it’s a doll with limbs that move a certain way, or a gadget that plays video and music, the InteRecon tech can recreate all that – virtually.

The researchers are proposing this as a better way to preserve the memories of your beloved toys and other personal items, as well as to help present objects for closer examination to museum visitors.

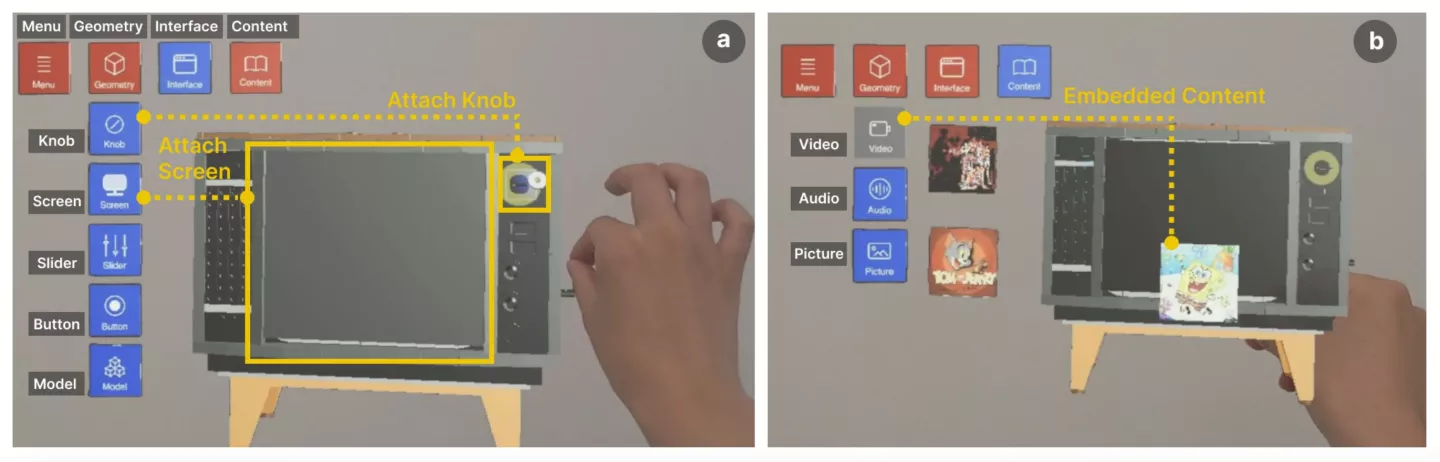

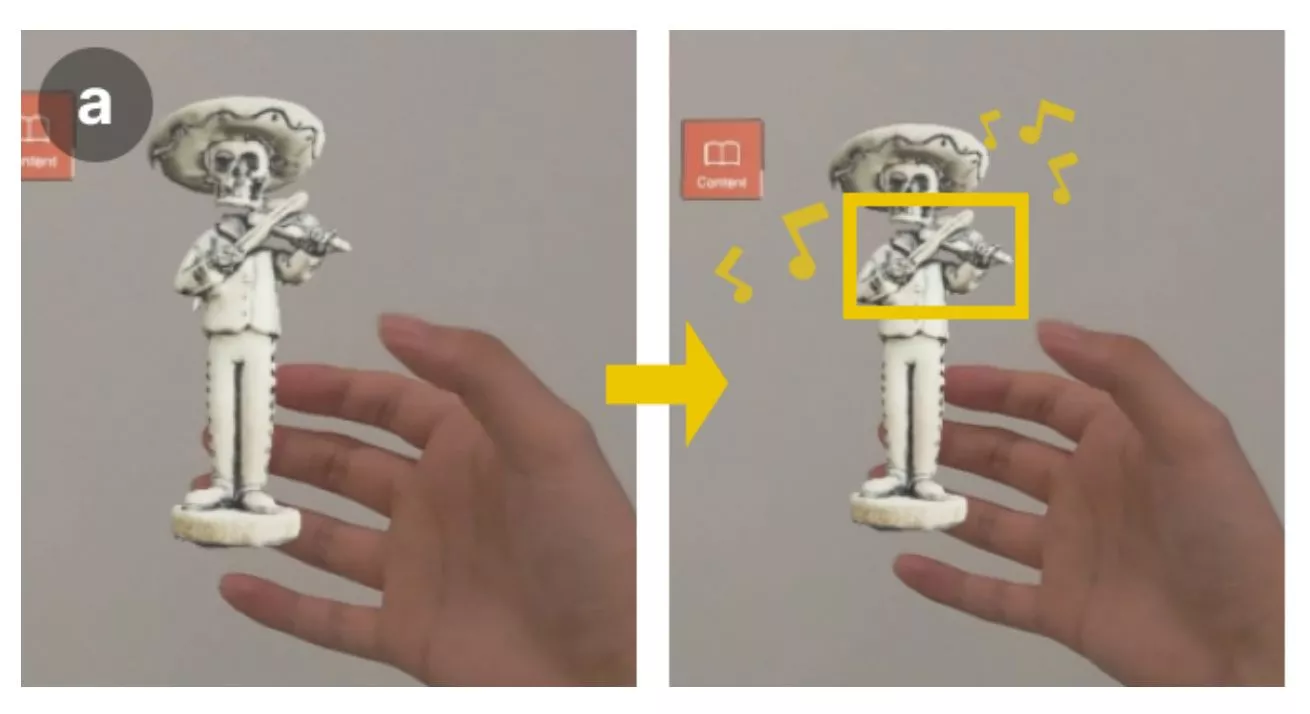

The team’s InteRecon tech – short for Interactive Reconstruction – creates what the researchers call an Interactive Digital Item (IDI) in four steps: reconstructing the object’s 3D appearance; adding physical transforms (controls that specify how a distinct part of the 3D model can function or be interacted with) so it works like the real-life version; reconstructing the object’s interface with buttons, knobs, and sliders that actually work in MR; and adding embedded content, like the videos and music that were part of the original object.

Images provided by the research team

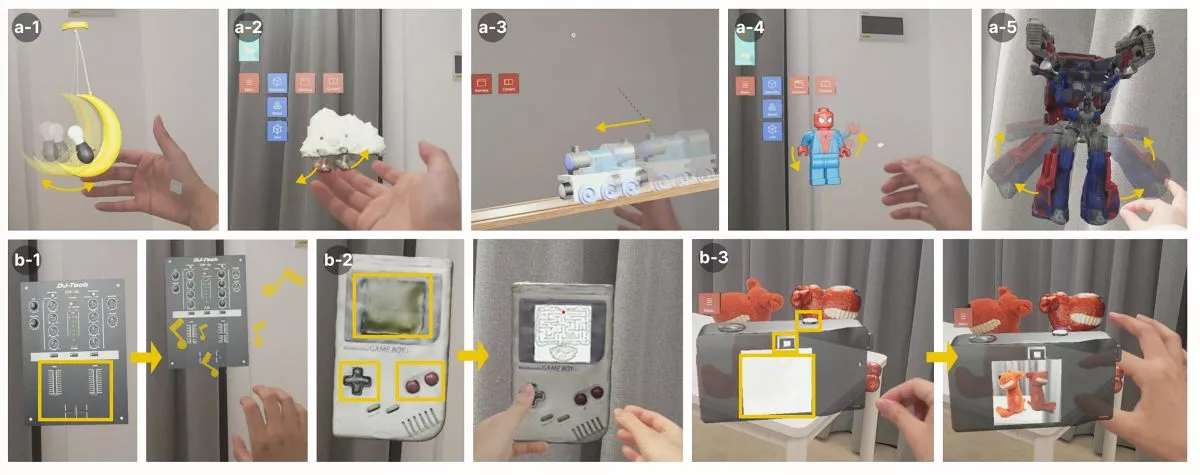

It starts with an iPhone app, which you use to scan the object and turn it into a 3D model. Next, you import the model into the InteRecon MR interface, which you access through a headset like the Meta Quest. Its virtual controls let you segment the scanned 3D object into distinct parts, and specify how they work using demonstrative tools.

For example, let’s say you had a bunny rabbit stuffed toy as a kid. You could scan it, segment the long ears as separate parts with programmable motion, flip through a bunch of motion options for those parts, and find the one that lets these ears flop freely when you interact with the toy. Similarly, you could segment its legs, and enable them to move with a certain range of motion similar to the actual toy rabbit.
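For the technically curious, here is one rough way to picture the rabbit example in code. This is only an illustrative sketch, not InteRecon’s actual data model or API: the class names, the file name `rabbit.usdz`, and the angle limits are all made up for the example. It models an IDI as the four ingredients described earlier, and a physical transform as a hinge that clamps a part’s rotation to a set range of motion.

```python
from dataclasses import dataclass, field


@dataclass
class PhysicalTransform:
    """Hypothetical hinge-style transform: one part rotating within limits."""
    part: str
    min_deg: float
    max_deg: float
    angle_deg: float = 0.0

    def rotate(self, delta_deg: float) -> float:
        # Clamp the new angle so the virtual part cannot move
        # further than the assumed range of the real one.
        target = self.angle_deg + delta_deg
        self.angle_deg = max(self.min_deg, min(self.max_deg, target))
        return self.angle_deg


@dataclass
class InteractiveDigitalItem:
    """Hypothetical container for the four ingredients of an IDI."""
    mesh_file: str                                  # scanned 3D appearance
    transforms: list = field(default_factory=list)  # physical transforms per part
    controls: dict = field(default_factory=dict)    # button/knob name -> action
    media: list = field(default_factory=list)       # embedded videos or music


# Assumed values for illustration only.
rabbit = InteractiveDigitalItem(mesh_file="rabbit.usdz")
ear = PhysicalTransform(part="left_ear", min_deg=-30.0, max_deg=45.0)
rabbit.transforms.append(ear)

# Pushing the ear 60 degrees stops at the 45-degree limit.
print(ear.rotate(60.0))
```

In this sketch, the range of motion is just a pair of numbers per part, which is why trying out different motion options for the same scanned part would be cheap.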

Okay, that’s plenty cool on its own, and far beyond what you can do with current 3D scanning tech. What’s particularly noteworthy is how InteRecon works with electronic items. Watch the video below to see how the team brings an old iPod Shuffle to life in MR, complete with functioning buttons and music that plays from it.

InteRecon: Towards Reconstructing Interactivity of Personal Memorable Items in Mixed Reality

The researchers also demonstrate how you could replicate a toy TV set that played a selection of preset cartoon shows. The tool lets you simulate the way the knobs flip between clips, mark the area on the toy where the screen would go, and upload videos to display in that screen area. It’s really inventive, and the demo makes the digitization process look fairly simple and intuitive.

This tech could go beyond digitizing toys: as some users in a related study noted, it could allow fashion designers to experiment with different materials in MR, and perhaps even help teach medical students how to perform challenging surgeries.

To that end, the researchers intend to upgrade InteRecon’s physical simulation engine to support higher precision in the way the IDIs work, and extend its capabilities to recreate entire physical environments you can interact with. They also plan to explore the use of generative AI models to recreate lost personal items by simply describing them out loud, and even 3D print the results.

The team will present its work at this year’s ACM CHI conference in Yokohama, Japan, starting later this month.
